AI Research & Innovation

Exploring the frontiers of machine learning, neural networks, and artificial intelligence through rigorous research and interactive demonstrations.

Research Domains

Dive into our research across multiple domains, from theoretical foundations to practical applications.

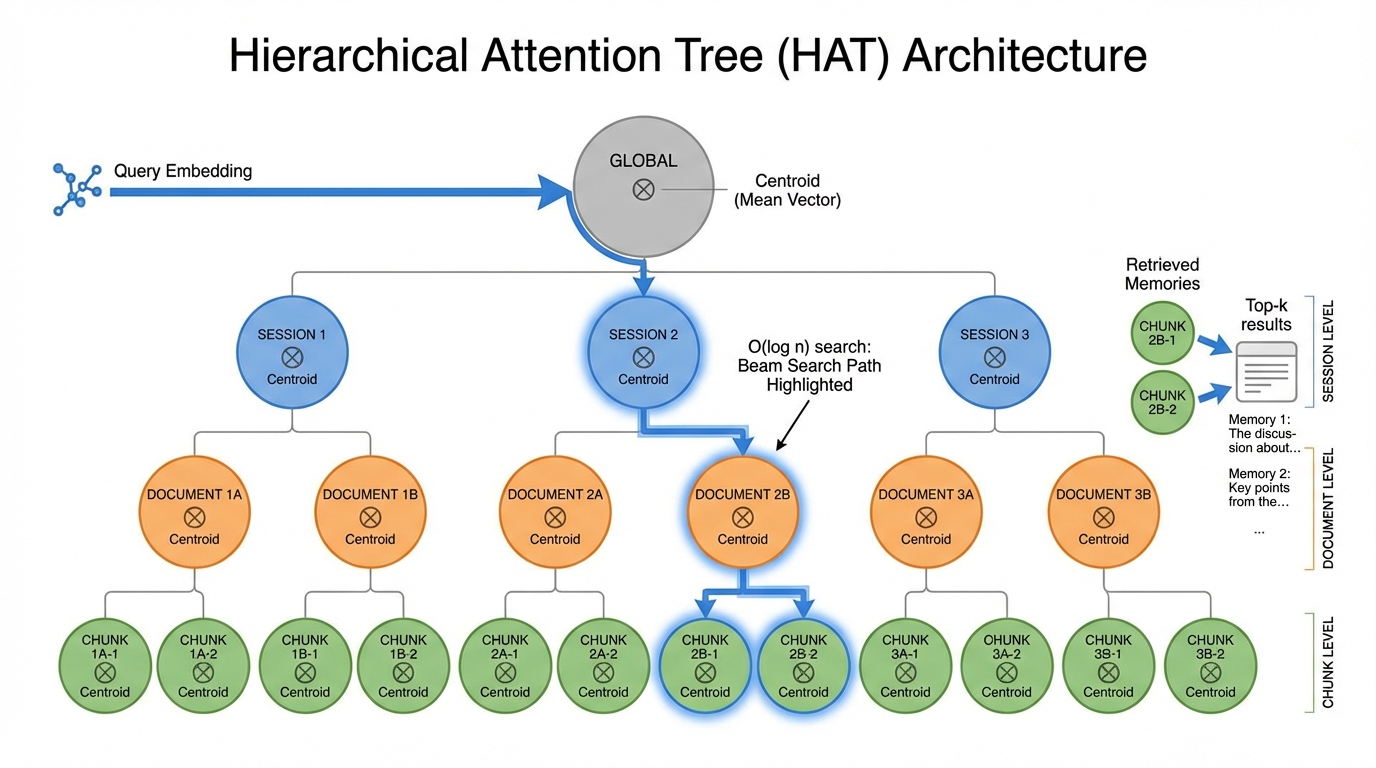

HAT

Hierarchical Attention Tree: a new spatial-hierarchical database model

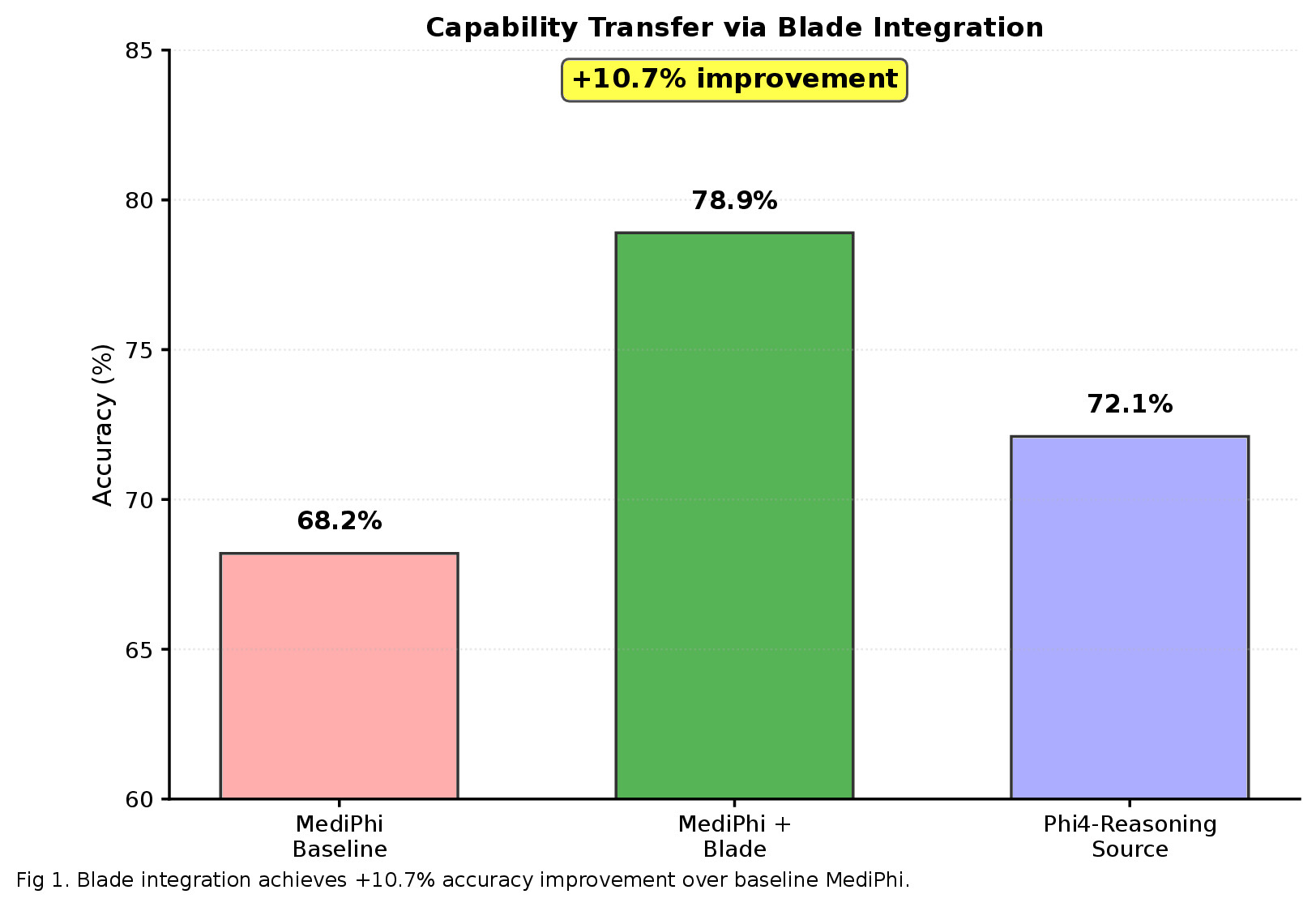

Blades

Hot-swappable capability injection through hidden state transfer

Journals

Research publications on AI memory, model composition, and inference

Labs

Interactive experiments and research environments

Thoughts

Essays, insights, and reflections on AI research

Featured Research

Our latest and most impactful work

HAT: Hierarchical Attention Tree

A novel spatial-hierarchical database that achieves 100% recall while building its index 70x faster than HNSW.
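The 100% recall figure is most naturally read as recall@k measured against exact (brute-force) nearest-neighbour search, which is the usual convention in HNSW comparisons. The source does not give k, the dataset, or the distance metric, so those are assumptions here. A minimal stdlib sketch of how such a number is computed (the helper names `exact_top_k` and `recall_at_k` are hypothetical):

```python
import math
import random


def exact_top_k(data, query, k):
    """Brute-force ground truth: indices of the k vectors closest to `query` (Euclidean)."""
    order = sorted(range(len(data)), key=lambda i: math.dist(data[i], query))
    return set(order[:k])


def recall_at_k(returned, truth):
    """Fraction of the true k nearest neighbours that an index actually returned."""
    return len(set(returned) & truth) / len(truth)


random.seed(0)
data = [[random.gauss(0, 1) for _ in range(16)] for _ in range(1000)]
query = [random.gauss(0, 1) for _ in range(16)]
truth = exact_top_k(data, query, k=5)

# A hypothetical index result: 4 of the 5 true neighbours plus one wrong id (-1).
returned = sorted(truth)[:4] + [-1]
print(recall_at_k(returned, truth))  # 0.8
```

A recall of 1.0 means every true neighbour was returned. Build time is a separate measurement and is not shown here.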

Blades: Capability Enhancement

Hot-swappable capability injection through hidden state transfer between specialized models.

ARMS: Attention Reasoning Memory Store

A spatial memory architecture that caches attention states at their coordinates.

Position IS Relationship

Traditional databases require relationships to be declared explicitly. Vector databases discard structure entirely, keeping only embedding similarity. HAT preserves both structural relationships AND semantic proximity.

- Multi-resolution queries at session, document, or chunk level

- Structural priors as indices: exploit the known hierarchy

- Incremental centroid maintenance without full recomputation

- Sleep-inspired consolidation for index maintenance
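The first three points can be illustrated with a toy tree. This is a minimal sketch under stated assumptions, not HAT's actual implementation. It assumes each internal node stores the mean of all chunk embeddings beneath it, uses Euclidean distance, and descends with a simple beam search. All class, method, and parameter names (`HierarchicalIndex`, `insert`, `search`, `beam`) are hypothetical, and sleep-inspired consolidation is not modelled.

```python
from __future__ import annotations

import math
from dataclasses import dataclass, field

# Hypothetical hierarchy levels, matching the session/document/chunk wording above.
LEVELS = ["root", "session", "document", "chunk"]


@dataclass
class Node:
    name: str
    level: int  # index into LEVELS
    centroid: list[float] = field(default_factory=list)
    count: int = 0  # number of chunk vectors beneath this node
    children: dict[str, Node] = field(default_factory=dict)

    def absorb(self, vec: list[float]) -> None:
        """Running-mean update: fold one new vector into the centroid in O(d)."""
        self.count += 1
        if not self.centroid:
            self.centroid = list(vec)
        else:
            self.centroid = [c + (x - c) / self.count for c, x in zip(self.centroid, vec)]


class HierarchicalIndex:
    """Toy tree whose nodes store the mean of all chunk vectors below them."""

    def __init__(self) -> None:
        self.root = Node("root", 0)

    def insert(self, session: str, document: str, chunk: str, vec: list[float]) -> None:
        # The known structure decides where the vector goes: no clustering step,
        # and only the ancestors on this path have their centroids updated.
        node = self.root
        node.absorb(vec)
        for level, name in enumerate([session, document, chunk], start=1):
            node = node.children.setdefault(name, Node(name, level))
            node.absorb(vec)

    def search(self, query: list[float], level: str = "chunk", beam: int = 2) -> list[str]:
        """Descend level by level, keeping the `beam` children closest to the query."""
        target = LEVELS.index(level)
        frontier = [self.root]
        while frontier and frontier[0].level < target:
            candidates = [c for n in frontier for c in n.children.values()]
            candidates.sort(key=lambda c: math.dist(c.centroid, query))
            frontier = candidates[:beam]
        return [n.name for n in frontier]


idx = HierarchicalIndex()
idx.insert("s1", "d1", "c1", [0.0, 0.0])
idx.insert("s1", "d1", "c2", [0.0, 1.0])
idx.insert("s2", "d2", "c3", [10.0, 10.0])
print(idx.search([9.0, 9.0], level="session", beam=1))  # ['s2']
print(idx.search([0.0, 0.9], level="chunk", beam=1))  # ['c2']
```

Because the hierarchy comes from the data itself (the session and document a chunk belongs to), insertion needs no clustering pass, and the running-mean update `c += (x - c) / n` touches only the ancestors of the new chunk. A greedy beam descent like this one can miss a true nearest chunk whose parent falls outside the beam, so the sketch does not explain how HAT reaches its reported recall.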

Ready to Explore?

Join researchers worldwide in pushing the boundaries of artificial intelligence. All our research is open and accessible.